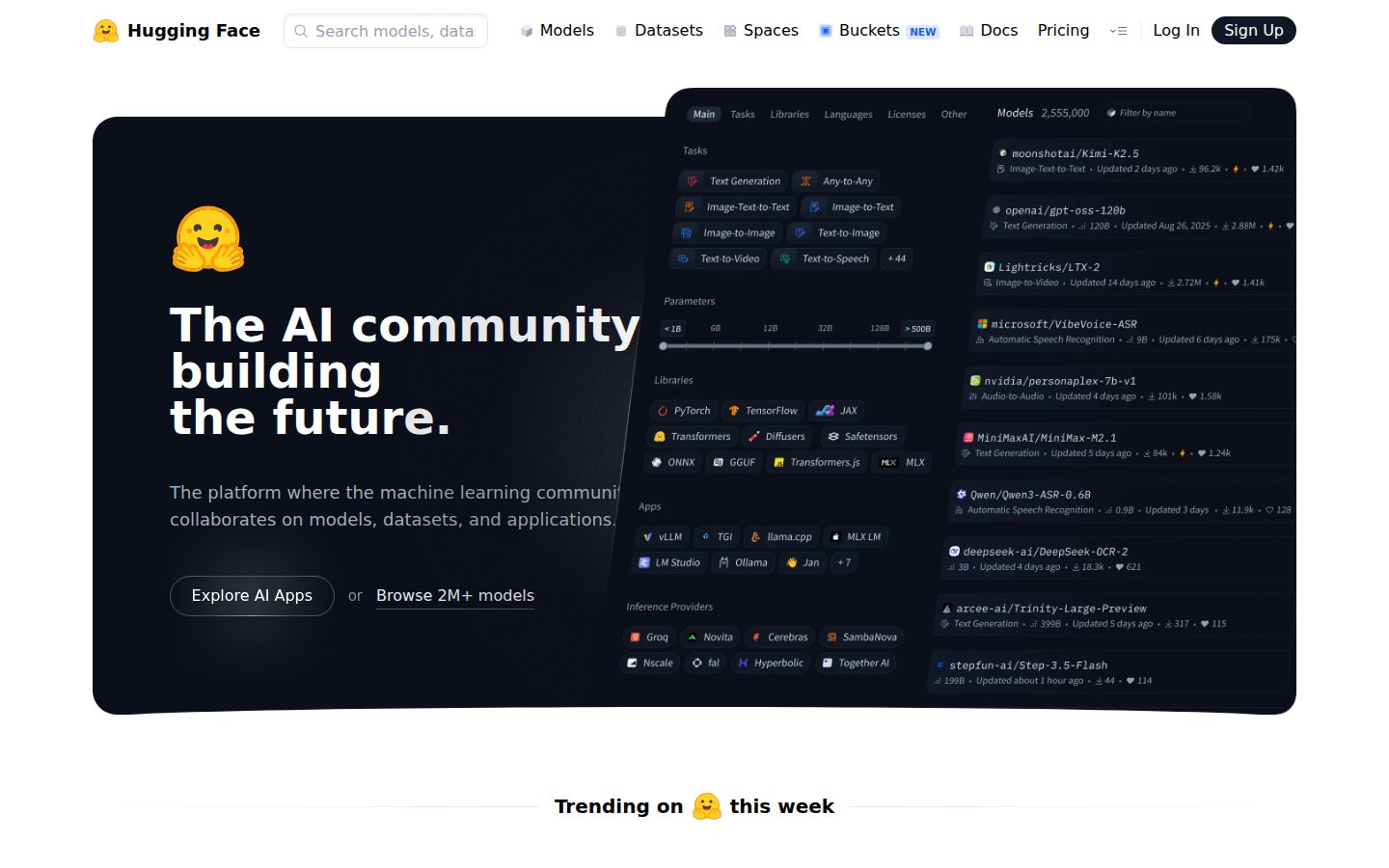

Hugging Face is a platform and open-source ecosystem designed for building, sharing, and deploying machine learning models. It operates as a model repository and collaboration hub, often compared to GitHub in its role within the AI community. Users can browse and download from over 500,000 publicly available models and 100,000 datasets contributed by researchers, companies, and individual developers worldwide.

At its core, Hugging Face provides a suite of open-source Python libraries, most notably the Transformers library, which offers standardized interfaces for working with state-of-the-art models for natural language processing, computer vision, audio, and multimodal tasks. Additional libraries such as Datasets, Diffusers, and PEFT extend this ecosystem to cover data loading, image generation, and parameter-efficient fine-tuning respectively.
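As a rough illustration of that standardized interface, the sketch below loads a text-classification model through the Transformers `pipeline` helper. The model id is only an example choice, and `top_label` and `classify` are hypothetical helper names introduced here. The import is deferred into the function because the first call downloads the model from the Hub and needs network access.

```python
from typing import List


def top_label(predictions: List[dict]) -> str:
    """Return the label with the highest score.

    Expects a list of {"label": str, "score": float} dicts, which is the
    shape a text-classification pipeline returns for a single input string.
    """
    return max(predictions, key=lambda p: p["score"])["label"]


def classify(
    text: str,
    model_id: str = "distilbert-base-uncased-finetuned-sst-2-english",  # example model, an assumption
) -> str:
    # Imported lazily so top_label stays usable without transformers installed.
    from transformers import pipeline

    # Downloads and caches the model on first use.
    classifier = pipeline("text-classification", model=model_id)
    return top_label(classifier(text))
```

Calling `classify("I really enjoyed this.")` would return whichever label the chosen model scores highest. Swapping `model_id` for another text-classification checkpoint leaves the rest of the code unchanged.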

The platform also offers Spaces, a feature for hosting and sharing interactive machine learning demos built with frameworks like Gradio or Streamlit. Because a Space runs in the browser, practitioners can showcase model capabilities without requiring users to run code locally. Organizations can additionally use private repositories and team management features to collaborate internally.
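For a sense of what such a demo looks like in code, here is a minimal Gradio sketch. The `word_count` function is a hypothetical stand-in for a real model call, and `build_demo` is an illustrative name. In a Space this would typically live in the app's entry file, with the launch call at the bottom.

```python
def word_count(text: str) -> str:
    """Stand-in for a model: report how many whitespace-separated words were entered."""
    n = len(text.split())
    return f"{n} word(s)"


def build_demo():
    # Imported lazily so word_count can be used and tested without gradio installed.
    import gradio as gr

    # One text box in, one text box out; Gradio renders the web UI.
    return gr.Interface(fn=word_count, inputs="text", outputs="text")
```

Running `build_demo().launch()` starts a local web server for the demo. Replacing `word_count` with a function that calls a model turns it into a model showcase.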

Hugging Face targets a broad audience ranging from academic researchers and independent developers to enterprise engineering teams. Its free tier provides access to the core repository and community features, while paid plans add private storage, dedicated inference endpoints, and enterprise security controls. Managed inference APIs allow developers to call hosted models directly via HTTP without managing infrastructure.
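Calling a hosted model over HTTP can be sketched with the standard library alone. The base URL below is an assumption based on the commonly documented serverless endpoint and may differ for dedicated endpoints or newer API versions. The function names and the `{"inputs": ...}` payload shape are illustrative and should be checked against the current documentation for the task at hand.

```python
import json
import urllib.request

# Assumption: base URL of the hosted inference service; verify against current docs.
API_BASE = "https://api-inference.huggingface.co/models"


def build_inference_request(model_id: str, text: str, token: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for a hosted model."""
    payload = json.dumps({"inputs": text}).encode("utf-8")
    return urllib.request.Request(
        url=f"{API_BASE}/{model_id}",
        data=payload,
        headers={
            "Authorization": f"Bearer {token}",  # personal access token
            "Content-Type": "application/json",
        },
        method="POST",
    )


def query(model_id: str, text: str, token: str):
    """Send the request and decode the JSON response (requires network access)."""
    request = build_inference_request(model_id, text, token)
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))
```

Separating request construction from sending keeps the network call in one place, which makes the payload and headers easy to inspect before anything leaves the machine.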

In the AI tooling market, Hugging Face occupies a central position as a neutral, community-driven alternative to proprietary model providers. Its combination of open-source libraries, a large public model hub, and optional managed infrastructure has made it a widely adopted resource across both research and production machine learning workflows.