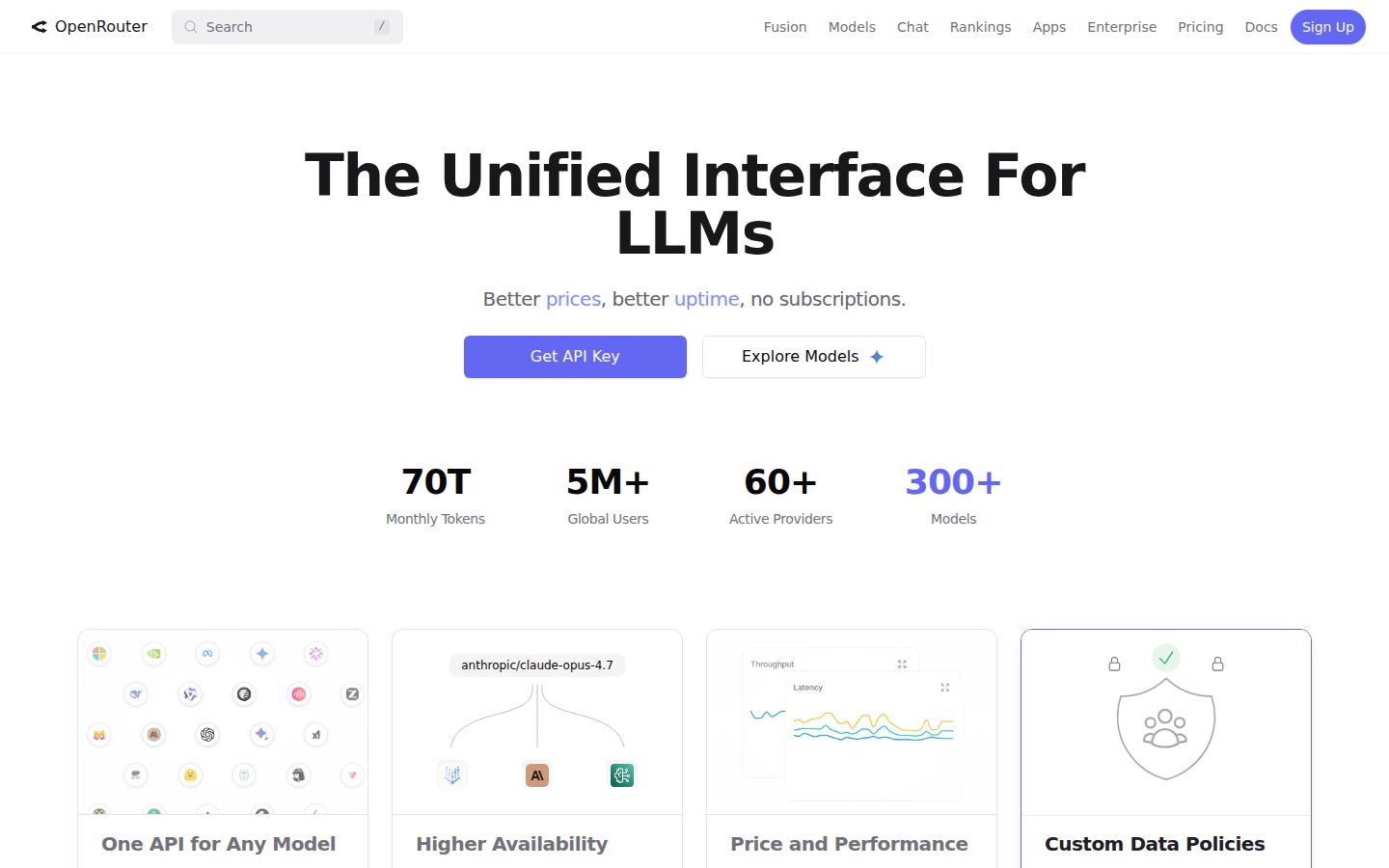

OpenRouter is an API aggregation service that gives developers access to AI models from many providers through a single, OpenAI-compatible interface. One API key and one request format replace the separate keys and integration protocols that each provider would otherwise require.

Supported providers include OpenAI, Anthropic, Google, Cohere, and Meta, among many others, giving users access to GPT models, Claude, Gemini, LLaMA, and numerous open-source alternatives. Because OpenRouter keeps the API format the same across all supported models, developers can switch models or compare options without rewriting their applications.
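To make the "switch without rewriting" point concrete, here is a minimal sketch of an OpenAI-style chat request in which only the `model` string changes between providers. The endpoint URL, the model identifiers, and the helper names `build_chat_request` and `send` are assumptions for illustration; check the OpenRouter documentation for the current values before relying on them.

```python
import json
import urllib.request

# Assumed endpoint for the OpenAI-compatible chat API; verify against the docs.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_chat_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # the only field that changes when switching models
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first reply, assuming an OpenAI-style response body."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Illustrative model identifiers; the same code path serves both.
request_a = build_chat_request("openai/gpt-4o", "Hello", api_key="YOUR_KEY")
request_b = build_chat_request("anthropic/claude-3.5-sonnet", "Hello", api_key="YOUR_KEY")
```

Both requests target the same URL with the same headers and body shape, so comparing two models amounts to calling `send` on each and inspecting the replies.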

OpenRouter targets developers, businesses, and researchers who want flexibility in model selection without managing a separate relationship with each provider. Its features include model routing based on performance or cost preferences, usage analytics, and simplified billing across multiple AI services.
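The idea of routing by cost or performance preference can be illustrated with a small client-side sketch. This is not OpenRouter's routing logic, which is not described here; the `ModelOption` type, the `pick_model` function, the model names, and every number below are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    model_id: str
    cost_per_million_tokens: float  # placeholder value, not real pricing
    quality_score: float            # placeholder score between 0 and 1


def pick_model(options, prefer="cost", min_quality=0.0):
    """Pick the cheapest or the highest-scoring model above a quality floor."""
    eligible = [o for o in options if o.quality_score >= min_quality]
    if not eligible:
        raise ValueError("no model meets the quality floor")
    if prefer == "cost":
        return min(eligible, key=lambda o: o.cost_per_million_tokens)
    if prefer == "performance":
        return max(eligible, key=lambda o: o.quality_score)
    raise ValueError(f"unknown preference: {prefer!r}")


# Made-up catalog used only to exercise the function.
catalog = [
    ModelOption("provider-a/small", cost_per_million_tokens=0.5, quality_score=0.60),
    ModelOption("provider-b/medium", cost_per_million_tokens=3.0, quality_score=0.80),
    ModelOption("provider-c/large", cost_per_million_tokens=15.0, quality_score=0.95),
]

cheapest_good_enough = pick_model(catalog, prefer="cost", min_quality=0.75)
best_overall = pick_model(catalog, prefer="performance")
```

With this placeholder catalog, the cost preference with a 0.75 quality floor selects `provider-b/medium`, while the performance preference selects `provider-c/large`.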

The service operates in the growing AI API marketplace, where businesses want to avoid vendor lock-in and to optimize their AI usage for specific requirements such as cost, performance, or model capabilities. OpenRouter positions itself as middleware that provides choice and flexibility in an increasingly diverse AI model ecosystem.